For most of writing history, research meant time. You sat in libraries, made phone calls, mailed letters, read books that were themselves the product of years of reading other books. The accumulation was the point — the research didn't just inform the writing, it shaped the mind doing the writing. Something happened in the twenty minutes you spent waiting for a microfilm spool that you can't replicate in a search result, and we should be honest with ourselves about what's lost when that is compressed away.

That said: the compression has arrived. All four of the major AI tools writers are likely to use now offer dedicated research modes that do something qualitatively different from the standard chat interface. They browse the web autonomously, read dozens or hundreds of sources, and produce a structured report with citations while you go do something else. The reports take anywhere from a few minutes to nearly an hour. They are often surprisingly good. And they raise a set of questions particular to writers — not to analysts or product managers, the people these tools were largely built for.

This post covers what each tool does, how they differ, why they sometimes get things badly wrong, and what writers specifically can and can't use them for.

What "Deep Research" Actually Means

In a regular AI conversation, you ask a question and the model draws on what it learned during training. The answer is fast but limited to what the model already knows: nothing after its cutoff date, and nothing outside a training scope shaped by decisions made well before you sat down to write.

Deep Research changes this by making the AI an active agent. You give it a question. It breaks that question into sub-questions, goes to the web, reads actual pages and PDFs, synthesizes what it finds, and returns a report with citations you can follow. It is closer to "send an intern to the library for an afternoon" than "ask a knowledgeable friend." The intern can cover ground fast. Like any intern, they can also come back with confidently stated errors.

Here is what each of the four tools offers.

ChatGPT's Deep Research is the most explicitly "analyst" of the group — built for structured, comprehensive reports of the kind a consultant would produce. It breaks your query into sub-questions, browses multiple sources, and assembles a cited report that can run many pages. It shows its reasoning steps as it runs and allows you to interrupt mid-research to redirect. You can restrict it to specific websites or domains, which is useful if you want to limit research to particular publications or source types.

For a writer, the most useful features are source control and structured output. Reports include inline citations with links, section headers, and occasionally data visualizations. Coverage depth is impressive on broad, well-documented topics. On niche, contested, or primarily oral topics, it is more likely to paper over gaps with plausible-sounding filler. Available on paid plans; a lighter version is available to free users.

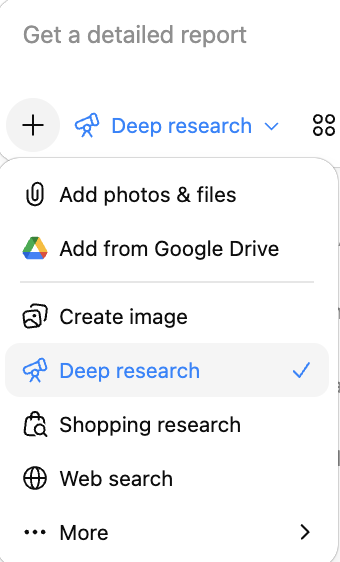

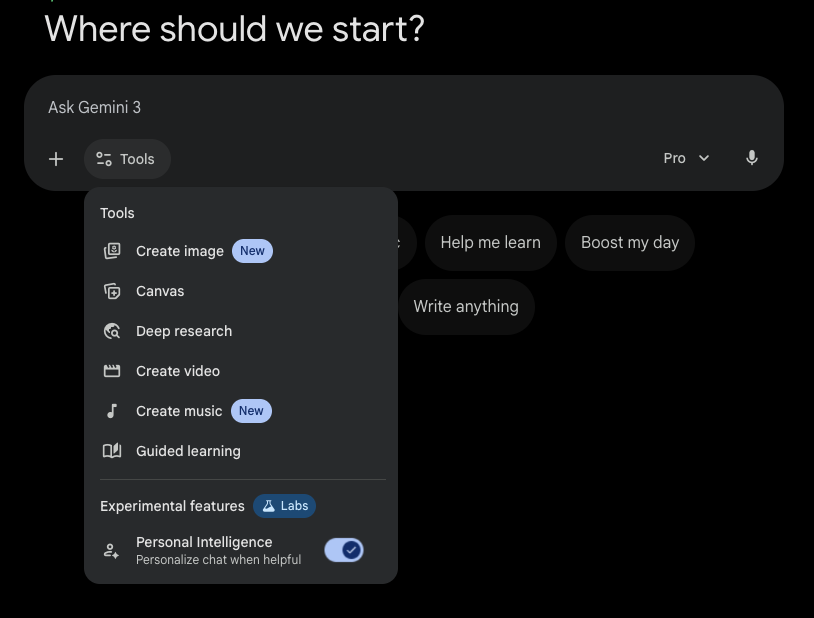

Gemini Deep Research is the best-integrated of the group if you live inside Google's ecosystem. It can pull from your Google Drive, Gmail, and Docs in addition to the open web — for writers using Google Docs as their primary workspace, this is genuinely useful. You can feed it your own drafts, notes, and reference files and let it synthesize across all of them alongside external sources. Reports export directly to Google Docs, which is the smoothest output-to-draft pipeline of the four tools.

It shows you its research plan before it begins, so you can review and modify it before the first search runs. A lighter version is available to free users. For writers who already work mainly in Google's tools, this is the one most likely to fit naturally into an existing workflow.

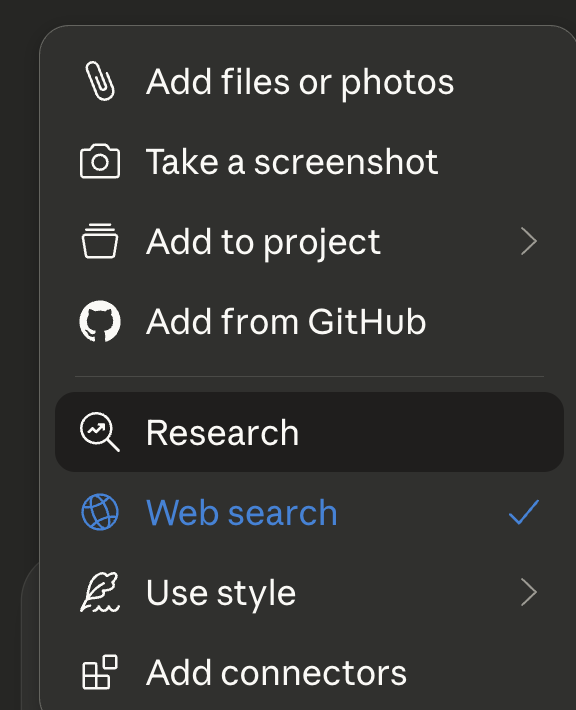

Claude's version — called simply Research, without "Deep" — positions itself somewhat differently. It breaks your question into components, gathers sources, and produces a cited report, but the character of the output tends toward synthesis and nuanced reasoning rather than maximum coverage. Where ChatGPT's reports can feel like analyst briefs, Claude's tend to feel more like a thoughtful summary with a point of view — which is often exactly what a writer needs from background research.

Claude Research can incorporate uploaded documents alongside web sources and connects to external services via integrations. The relevant advantage for writers is the same quality that makes Claude useful in revision sessions: it is more likely to acknowledge uncertainty, flag where sources disagree, and note the limits of what it found. Available on paid plans.

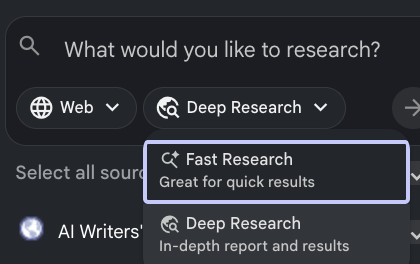

NotebookLM works differently from the other three and is worth understanding on its own terms. While ChatGPT, Gemini, and Claude Research all start from the open web, NotebookLM starts from your sources. You upload documents — PDFs, articles, notes, transcripts, Google Docs — and it builds a knowledge base from them. Its answers are grounded in what you've given it, not in what it can find online.

It offers two research modes: Fast Research synthesizes quickly across your uploaded sources; Deep Research reasons more extensively across them. The key distinction for writers is that NotebookLM is unlikely to hallucinate facts that weren't in your source material — it will tell you when it can't find something rather than inventing a plausible answer. The tradeoff is that it cannot discover new material. It is an excellent tool for the phase when you've done the gathering and need the synthesis. Its Audio Overview feature — which turns a set of sources into a conversational summary — is also a surprisingly useful way to quickly absorb a body of material. Available with a free tier; NotebookLM Plus offers expanded limits.

What Writers Can Actually Use This For

The three web-based tools were built with the analyst in mind. They work best for questions that have answers findable on the open web and that benefit from breadth. NotebookLM is the exception: it works best once you've already done the gathering.

| The need | What it looks like | ChatGPT | Gemini | Claude | NotebookLM |
|---|---|---|---|---|---|
| Period & setting | What did a 1940s Chicago jazz club actually look, sound, smell like? | ◉◉◉ | ◉◉◎ | ◉◉◎ | ◎◎◎ |
| Technical accuracy | Your character is a cardiologist. What would she say and know? | ◉◉◉ | ◉◉◉ | ◉◉◉ | ◉◉◉† |
| Synthesizing your notes | You have thirty tabs and six notebooks. Help. | ◉◉◎ | ◉◉◉ | ◉◉◎ | ◉◉◉ |
| Contested history | A real event with disputed accounts or moral complexity. | ◉◉◎ | ◉◉◎ | ◉◉◉ | ◉◉◎† |
| World-building science | The real physics underlying your speculative premise. | ◉◉◉ | ◉◉◉ | ◉◉◎ | ◎◎◎ |
| Culture you don't know | The texture of a community you're writing about from outside. | ◉◎◎ | ◉◎◎ | ◉◎◎ | ◎◎◎ |

◉◉◉ = strong fit · ◉◉◎ = useful · ◉◎◎ = proceed carefully · ◎◎◎ = wrong tool · † = only with relevant sources uploaded

The last row deserves more than a rating. These tools are excellent at describing cultures from the outside — they will give you a thorough, organized overview of a community you don't belong to. What they cannot give you is interiority. The experience of being inside a culture, the texture of its humor and its silences, the things that don't get written down — none of that is on the web in a form these tools can find. For that research, you need people.

Why These Tools Get Things Wrong

Every AI research tool will eventually hand you a report containing a confident error. Some are small and catchable — a wrong date, a misattributed quote. Others are structural: a synthesis built on a misread source, or a claim grounded in nothing at all. Understanding the specific ways these tools fail is not optional for a writer who intends to use them responsibly.

Every website can include a file called robots.txt that tells automated crawlers — including AI research agents — which pages they are and aren't allowed to access. Many publishers, newspapers, academic journals, archives, and specialized databases have either partially or fully blocked AI crawlers. The AI doesn't flag when a source is blocked. It simply can't read it.

The consequence: when you ask about a topic where the best sources have blocked access, the AI substitutes whatever it can reach — secondary sources, aggregators, SEO-optimized summaries, or older cached material. The report looks comprehensive. The citations are just the ones that happened to leave the door open, not the ones that are most authoritative. For writers researching anything where primary sources are behind paywalls or in restricted archives — literary criticism, medical research, legal records, newspaper archives — this is a significant and largely invisible problem.

What to do: Read the citations critically. If the report cites aggregators and summaries rather than primary sources, that's a signal the best material wasn't accessible. Use the tools' source-restriction features to specify preferred domains, and assume that paywalled or institutional sources are largely unavailable to the agent.
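If you are comfortable running a short script, you can see for yourself how a robots.txt file shuts out one crawler while leaving the door open for others. The sketch below uses Python's standard `urllib.robotparser` module. The robots.txt text, the crawler name `ExampleResearchBot`, and the `archive.example` URLs are all made up for illustration; real research agents use their own user-agent names, and real sites publish their own rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, invented for this example. It blocks one
# named crawler entirely and blocks everyone else from /private/ only.
SAMPLE_ROBOTS_TXT = """\
User-agent: ExampleResearchBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def can_agent_read(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the rules in robots_txt let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly, no network call
    return parser.can_fetch(user_agent, url)


ARTICLE_URL = "https://archive.example/articles/1932-fire"    # hypothetical
PRIVATE_URL = "https://archive.example/private/ledger-scans"  # hypothetical

# The named crawler is blocked from the whole site.
blocked_agent = can_agent_read(SAMPLE_ROBOTS_TXT, "ExampleResearchBot", ARTICLE_URL)

# Any other crawler may read the article but not the /private/ area.
other_agent_article = can_agent_read(SAMPLE_ROBOTS_TXT, "SomeOtherBot", ARTICLE_URL)
other_agent_private = can_agent_read(SAMPLE_ROBOTS_TXT, "SomeOtherBot", PRIVATE_URL)

print("ExampleResearchBot can read article:", blocked_agent)
print("SomeOtherBot can read article:", other_agent_article)
print("SomeOtherBot can read private page:", other_agent_private)
```

To check a real site, you would paste in the contents of its robots.txt file and the crawler name you care about. Treat the result as a hint only: a research agent may be kept out by paywalls or logins even when robots.txt allows it.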

Deep Research tools synthesize across many sources simultaneously. When those sources represent different eras, different national perspectives, or different sides of a scholarly debate, the synthesis can flatten those differences into a single coherent-sounding narrative that none of the individual sources would actually endorse.

A nineteenth-century primary account of an event might be stitched together with a twenty-first-century revisionist history and a Wikipedia summary, all presented as unified "what happened." A medical finding from a 2019 study might be blended with the more cautious conclusions of a 2024 follow-up that partially contradicted it. The report reads smoothly. The underlying incoherence is invisible.

What to do: Look at the citations as a set, not just individually. Do these sources belong in the same account? Are they from compatible eras and perspectives? For contested or evolving topics, explicitly ask the tool to keep sources separated by period or perspective rather than synthesizing them into a single narrative.

AI models are trained to produce fluent, helpful text. That training creates a systematic pressure against saying "I don't know" or "I couldn't find this." When a research question touches on something underdocumented — the daily experience of ordinary people in a historical period, the texture of a place before it was widely written about — the model may generate plausible-sounding details rather than acknowledging the gap.

These invented details are the most dangerous kind of error for writers, because they are exactly the kind of specific, textured information that makes fiction feel real. A fabricated detail about what a particular neighborhood smelled like in 1932 is not obviously wrong the way a fabricated date is. It sounds like something a researcher found. You put it in the book. A reader who knows the neighborhood finds it.

What to do: Be especially skeptical of specific sensory details, quotations, and statistics on underdocumented topics. Ask the tool explicitly: "Are you confident this is documented, or are you inferring?" A well-designed tool should acknowledge when it's extrapolating. If it doesn't, treat the detail as unverified until you can confirm it elsewhere.

Deep Research tools read the open web, which has a particular shape: a lot of marketing content, a lot of SEO-optimized summaries, a lot of confident second-hand claims amplified through repetition, and relatively little slow, careful primary scholarship. The AI doesn't reliably distinguish between a peer-reviewed paper and a well-ranking blog post that summarizes it — sometimes incorrectly.

This creates a systematic bias toward consensus and popular accounts. Minority perspectives, recent revisions to established accounts, and topics that are actively debated in academic literature but settled in popular coverage will tend to be underrepresented or misrepresented.

What to do: Use source-restriction features to prioritize reputable domains where possible. After reading a report on any topic with historical or scholarly complexity, ask: "What would a skeptic or revisionist say about this account?" That follow-up question reliably surfaces the parts of the topic the initial sweep flattened.
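One rough way to see the shape of a report's sourcing is to tally which sites its citations come from. The sketch below assumes you have copied the citation links out of a report into a list; the URLs are placeholders, and `tally_domains` is a hypothetical helper, not a feature of any of these tools.

```python
from collections import Counter
from urllib.parse import urlparse


def tally_domains(citation_urls):
    """Count how many citations come from each website host."""
    hosts = []
    for url in citation_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.site and site as the same source
        if host:  # skip anything that is not a full URL
            hosts.append(host)
    return Counter(hosts)


# Hypothetical citation list copied out of a research report.
citations = [
    "https://www.summaries.example/jazz-clubs-overview",
    "https://summaries.example/chicago-1940s",
    "https://journal.example/articles/south-side-venues",
    "https://blog.example/top-10-jazz-facts",
]

counts = tally_domains(citations)
for host, n in counts.most_common():
    print(f"{n:>2}  {host}")
```

A tally like this does not tell you which sources are trustworthy. It only shows where the report leaned, which makes it easier to notice when one summary site is carrying most of the weight.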

Always follow the citations for any claim that matters to your story. Do not just spot-check; follow. Open the source. Read the relevant passage. Confirm it says what the report claims. This is not a counsel of paranoia. It is the minimum standard of verification that has always applied to research. What AI has changed is how easy it is to skip this step because the report looks so complete.

A wrong date in a historical novel is a different kind of failure than a wrong date in a news article, but it is still a failure. And the readers who know the period will find it.

Four Prompts for Writers — One for Each Tool

These prompts are built to get the most from each tool's particular strengths. Adjust the subject matter to your project, but keep the framing — it's doing important work in each case.

The Things These Tools Cannot Do

These tools are excellent at the horizontal sweep: broad coverage, many sources, synthesized quickly. They are far weaker at vertical depth: the insight that comes from following one thread a very long way, the serendipitous discovery that happens when you're looking for something else. And they are essentially useless for research that doesn't exist in published form.

A medical report about a procedure is not the same as sitting with someone who has had it. A historical account of a migration is not the same as reading the letters that migrants wrote. A profile of a profession is not the same as a conversation with someone who hates their job and has never told anyone why. These tools give you the report. The letter, the conversation, the afternoon in the archive — that's still on you.

AI & THE CRAFT — TOOLS FOR WRITERS